Security ratings and vendor certifications measure a supplier's general posture, not how that supplier is connected to your specific environment. Using real discovery findings, this article makes the case for moving from generic vendor scores to context-based supplier intelligence signals, grounded in the concept of Digital Proximity: a measure of how deeply a supplier is technically embedded in your environment, not whether they passed a certification.

When an incident happens, be it a data breach, a ransomware infection that pivots through a third party, or an outage traced to a compromised supplier, a post-mortem begins. Someone pulls up the vendor risk report and finds that the supplier in question has a B+ from a major security ratings platform. Their SOC 2 certificate was renewed three months ago. Their self-assessed questionnaire was filed on time.

But what exactly were you measuring?

This is the structural failure at the heart of how organizations currently approach third-party risk. The tools we rely on to tell us whether a supplier is safe are measuring something real, but they are not measuring the right thing. That difference is where breaches live.

The Rating Is Not About Your Relationship

Security ratings platforms work by observing publicly visible signals from a supplier's internet-facing infrastructure and generating a score.

That score does not account for how deeply embedded the supplier is in your environment, which systems they connect to, which scripts they serve on your applications, or how much of your data flows through them.

Think about what that means in practice. Imagine two customers of the same cloud-based e-learning platform. Customer A has a single subdomain pointing to the vendor's login page: one DNS record, no data exchange beyond user credentials. Customer B has embedded the vendor's JavaScript library across their entire application portfolio, shares API credentials for automated provisioning, and has a direct database integration for compliance reporting.

The security rating both customers receive for that vendor is identical. The level of risk is not even close.
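To make the contrast concrete, here is a minimal Python sketch of how a context-aware measure could separate the two customers even though the vendor's rating is the same. The connection types and their weights are illustrative assumptions chosen for this example, not ThingsRecon's actual scoring model.

```python
# Hypothetical weights: how strongly each kind of connection embeds a
# vendor in a customer's environment. The values are illustrative only.
CONNECTION_WEIGHTS = {
    "dns_record": 1,
    "embedded_script": 5,
    "shared_api_credentials": 8,
    "database_integration": 10,
}


def proximity(connections):
    """Sum the weights of every connection a customer has to one vendor."""
    return sum(CONNECTION_WEIGHTS[c] for c in connections)


# Customer A: one DNS record pointing at the vendor's login page.
customer_a = ["dns_record"]
# Customer B: embedded scripts, shared API credentials, database integration.
customer_b = ["embedded_script", "shared_api_credentials", "database_integration"]

vendor_rating = "B+"  # the generic rating is identical for both customers
print(vendor_rating, proximity(customer_a))
print(vendor_rating, proximity(customer_b))
```

The rating column never changes; only the connection-based number distinguishes the two relationships.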

When we built ThingsRecon, this was the central problem we set out to solve. Generic scoring evaluates a supplier as an independent entity. What security teams actually need to understand is the supplier in the context of their own digital environment.

- How is this supplier connected to us?

- What is the blast radius if something goes wrong with them?

- Which of our assets depend on their infrastructure?

Those are not questions a security rating can answer. Answering them requires mapping the actual digital connections.

What Deep Discovery Actually Finds

When we run a discovery assessment on an organization's external surface, we do not start with a list of contracted vendors and score them. We start from the organization's own domains and work outward, crawling headers, reading scripts, analyzing DNS records, following CDN references, tracing API endpoints. We are reconstructing what an attacker would discover if they spent a serious amount of time mapping the target before deciding how to move.
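One small piece of that outward crawl, reading scripts, can be sketched with Python's standard library. This is a simplified illustration under stated assumptions, not the discovery engine itself: the class and function names are hypothetical, the sample page and hostnames are invented, and a real crawl would also cover headers, DNS records, CDN references, and API endpoints.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ScriptHostCollector(HTMLParser):
    """Collect hostnames of <script src=...> tags served from outside our domain."""

    def __init__(self, own_domain):
        super().__init__()
        self.own_domain = own_domain
        self.third_party_hosts = set()

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        src = dict(attrs).get("src")
        if not src:
            return  # inline script, nothing to resolve
        host = urlparse(src).hostname
        # Relative URLs have no hostname and are treated as first-party.
        if host and host != self.own_domain and not host.endswith("." + self.own_domain):
            self.third_party_hosts.add(host)


def third_party_script_hosts(html, own_domain):
    """Return the sorted external hosts that serve scripts to this page."""
    collector = ScriptHostCollector(own_domain)
    collector.feed(html)
    return sorted(collector.third_party_hosts)


# Invented sample page for illustration.
page = """
<html><head>
<script src="/static/app.js"></script>
<script src="https://cdn.example.com/app.js"></script>
<script src="https://widgets.vendor.test/embed.js"></script>
<script src="//analytics.tracker.test/t.js"></script>
</head></html>
"""
print(third_party_script_hosts(page, "example.com"))
```

Each host returned this way is a live connection found from the organization's own surface, whether or not it appears on any vendor list.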

What we find is consistently surprising:

Neither of these risks showed up in any security rating. Neither was captured by any questionnaire. Both were found in under an hour of passive, non-invasive scanning.

The Certification Paradox

There is a specific frustration that GRC professionals experience with increasing frequency: scanning a supplier that has recently renewed its ISO 27001 certification or received a clean SOC 2 Type II report, and finding dozens of outdated software components, expired certificates, and misconfigured security headers on the parts of their infrastructure that connect directly to the client's environment.

This is not a failure of the certification process, or at least not entirely. Certifications assess what an organization has in place at the time of the audit, against a set of defined controls. They cannot and do not assess whether those controls are working in the specific context of every customer relationship the vendor has. They cannot tell you whether the component serving scripts to your application is the same one covered by the SOC 2 scope.

Evidence-based risk assessment, where the evidence is technical, current, and context-specific, is the only method that closes this gap.

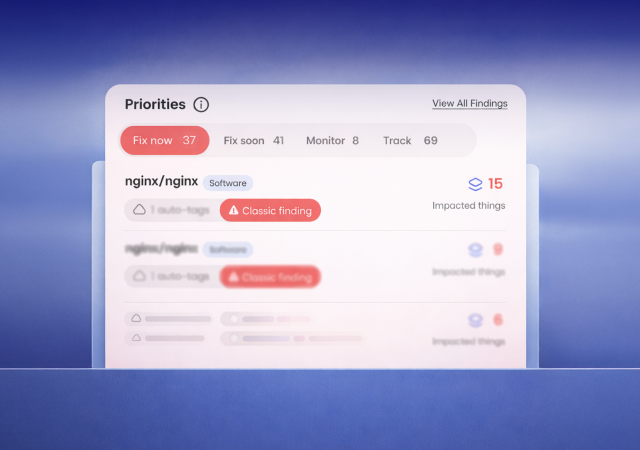

From Score to Signal

The shift we are advocating for is a move from generic vendor scores to context-based supplier intelligence signals. A signal is a live technical observation tied to a specific digital connection. It is evidence that tells you which part of which supplier's infrastructure is connecting to which part of your environment, what state that infrastructure is in today, and what the contextual risk is given the nature of the connection.
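As a rough illustration of what such a signal might look like as data, the sketch below models one observation per connection. The field names, sample hosts, and observations are assumptions made for this example and do not describe any particular product's schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SupplierSignal:
    """One technical observation tied to one specific digital connection."""
    supplier_host: str    # which part of the supplier's infrastructure
    our_asset: str        # which part of our environment it connects to
    connection_type: str  # nature of the connection, e.g. "embedded_script"
    observation: str      # the state of that infrastructure when observed
    observed_on: date     # when the observation was made


def signals_for_asset(signals, asset):
    """Return every signal that touches a given asset of ours."""
    return [s for s in signals if s.our_asset == asset]


# Invented sample data for illustration.
signals = [
    SupplierSignal("widgets.vendor.test", "shop.example.com",
                   "embedded_script", "outdated JavaScript library", date(2025, 1, 15)),
    SupplierSignal("login.vendor.test", "learn.example.com",
                   "dns_record", "certificate valid", date(2025, 1, 15)),
]

for signal in signals_for_asset(signals, "shop.example.com"):
    print(signal.supplier_host, signal.connection_type, signal.observation)
```

Unlike a single vendor score, each record names both ends of the connection, so the same supplier can produce very different signals for different assets.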

This requires a fundamentally different technical approach. It requires discovering the digital supply chain from the inside out, starting from your own surface, rather than scoring vendors from a list. It requires understanding the difference between a supplier that appears in a DNS record and one that is serving JavaScript to thousands of your users. It requires what we call Digital Proximity: a measure of how deeply embedded a supplier is in your digital environment, not just whether they passed an audit.

Security ratings are not going away, and neither are vendor questionnaires. But if your supply chain risk program relies on them exclusively, you are measuring confidence, not risk.

What Security Leaders Should Ask Next

The framework most organizations are still using was built for a different era, when vendor relationships were fewer and easier to document.

Do you know every supplier that is currently serving code, scripts, or data connections to your production applications? Not the contracted vendors list. The live digital connections.

If you cannot answer that question with certainty, you have a shadow supply chain and no visibility into its risk posture. Fortunately, the tools and the methodology to map, score, and continuously monitor the actual digital supply chain exist.
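The gap between the contracted vendor list and the live connections can be expressed as a simple comparison. The sketch below assumes you already have a set of discovered hosts and a set of domains belonging to contracted vendors; the function name and all hostnames are hypothetical.

```python
def shadow_suppliers(discovered_hosts, contracted_domains):
    """Return discovered hosts that match no contracted vendor domain."""

    def is_contracted(host):
        # A host matches if it equals a contracted domain or is a subdomain of it.
        return any(host == d or host.endswith("." + d) for d in contracted_domains)

    return sorted(h for h in discovered_hosts if not is_contracted(h))


# Invented sample data for illustration.
contracted = {"vendor.test", "payments.test"}
discovered = {
    "widgets.vendor.test",
    "api.payments.test",
    "analytics.tracker.test",
    "fonts.unknown-cdn.test",
}

print(shadow_suppliers(discovered, contracted))
```

Anything this comparison returns is serving your production surface without appearing in your vendor risk program.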
